Make believe is at the heart of the Disney magic, and with a new short film, Make Believe, Disney Studios took a significant step into the future of “computational cinematography.” A 3D live-action film about a young girl who discovers the power of imagination she finds in studying science, Make Believe was shot using Disney’s 3D hybrid rig, created through a unique partnership between Disney, camera and lighting manufacturer ARRI and the German R&D center Fraunhofer HHI.

Computational cinematography is based on the idea that, by shooting with multiple cameras, cinematographers can capture enough information to create the images they want in post, manipulating resolution, frame rate and depth information. At Disney, the new camera system tackles 3D production in an attempt to streamline a laborious, time-consuming process.

Making a 3D film isn’t a simple task, whether it is shot natively in 3D or converted from 2D to 3D in post production. Native 3D cinematography, whether with beam-splitter or side-by-side rigs, introduces a range of image problems (vertical misalignment, keystoning from convergence, differential lens flares, and mismatched focus and zoom tracking between lenses) and requires bulky, heavy rigs and larger crews. Productions also need on-set 3D viewing, an on-site stereographer and inter-axial adjustments.

The second and more commonly used method, converting imagery from 2D to 3D, requires huge teams of rotoscopers who painstakingly isolate each object in every frame by hand.

“We thought, there’s got to be a better mousetrap,” said Howard Lukk, Disney’s Vice President of Production Services, who directed Make Believe. He was drawn to the nascent field of computational cinematography (also known as CompCine), which integrates computer algorithms with image capture. Several cameras capture an object or scene from multiple angles, and the resulting image data can be manipulated in post production. That includes segmentation (isolating objects) without rotoscoping, as well as adjusting attributes such as lens, lighting and camera moves. The longer-term goal is automatic segmentation.
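Once a per-pixel depth map exists, one simple way to isolate objects without hand rotoscoping is a depth key: keep every pixel whose depth falls inside a chosen range. The sketch below is a minimal illustration of that general idea under stated assumptions, not the Disney/Fraunhofer pipeline; the function names `depth_key` and `apply_mask`, the depth values (assumed to be in meters) and the near/far thresholds are all hypothetical.

```python
from typing import List

DepthMap = List[List[float]]  # hypothetical: one depth value per pixel, in meters
Mask = List[List[bool]]


def depth_key(depth: DepthMap, near: float, far: float) -> Mask:
    """Mark a pixel as foreground (True) if its depth lies within [near, far]."""
    return [[near <= z <= far for z in row] for row in depth]


def apply_mask(image: List[List[int]], mask: Mask, fill: int = 0) -> List[List[int]]:
    """Keep pixels where the mask is True; replace all others with `fill`."""
    return [[px if keep else fill for px, keep in zip(img_row, mask_row)]
            for img_row, mask_row in zip(image, mask)]


if __name__ == "__main__":
    # Hypothetical 2x3 frame: an actor about 1 m away against a wall about 6 m away.
    depth = [[1.2, 1.3, 6.0],
             [1.1, 5.8, 6.1]]
    image = [[200, 210, 50],
             [205, 55, 60]]
    mask = depth_key(depth, near=0.5, far=2.0)
    print(mask)
    print(apply_mask(image, mask))
```

A hard threshold like this produces jagged edges on real footage; practical systems refine the matte at object boundaries and filter it over time so edges hold steady from frame to frame.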

How does this produce 3D imagery? “With CompCine, you capture light fields and then build the image in post,” Lukk explains. “Because we know the difference between lenses among the cameras, we can triangulate and come up with the data we need to capture depth maps to create 3D images.”
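The triangulation Lukk describes rests on a standard stereo relation. For two rectified pinhole cameras with focal length f (in pixels) separated by a baseline B, a point that shifts by d pixels between the two images (its disparity) lies at depth Z = f·B / d. The sketch below assumes that idealized geometry; the focal length, baseline and disparity values are hypothetical, and a real rig mixing different cameras and lenses must first be calibrated and rectified before this relation applies.

```python
from typing import List, Optional


def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth in meters of a point seen by two rectified, parallel pinhole cameras.

    Assumes focal length in pixels and baseline in meters (illustrative units).
    """
    if disparity_px <= 0:
        # Zero disparity means the point is at infinity; negative values are invalid here.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_map_from_disparity(disparity: List[List[float]], focal_px: float,
                             baseline_m: float) -> List[List[Optional[float]]]:
    """Convert a disparity map (list of rows) to a depth map; None marks invalid pixels."""
    return [[depth_from_disparity(d, focal_px, baseline_m) if d > 0 else None
             for d in row] for row in disparity]


if __name__ == "__main__":
    # Hypothetical calibration: 1800 px focal length, 12 cm between camera centers.
    print(depth_from_disparity(36.0, focal_px=1800.0, baseline_m=0.12))  # about 6 m
    print(depth_map_from_disparity([[72.0, 36.0, 0.0]], 1800.0, 0.12))
```

Because Z is inversely proportional to d, a one-pixel matching error shifts the estimated depth far more for distant objects than for near ones, which is one reason baseline and secondary-camera resolution matter.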

This technique has been used for some time by visual effects artists; in fact, Lukk was inspired by an ILM demonstration that used multiple cameras to create 3D pre-visualizations for Pirates of the Caribbean. He had also read an article in a SMPTE publication about a trifocal rig that had been built some years earlier. Putting these two pieces of information together, Lukk believed a trifocal camera could be used to create 3D images from live-action footage without rotoscoping.

With Disney on board, Lukk sought out other industry experts engaged in complementary research. The Fraunhofer Society is a German research organization with 67 institutes, each devoted to a specific field of applied science. One of them, HHI (the Heinrich Hertz Institute for Telecommunications), had already done research on computational cinematography and readily joined the team, as did German camera and lighting manufacturer ARRI. “This was a very unusual alliance,” says Lukk. “It came about because I was seeking the best of technology and this was where the peak of the research was.”

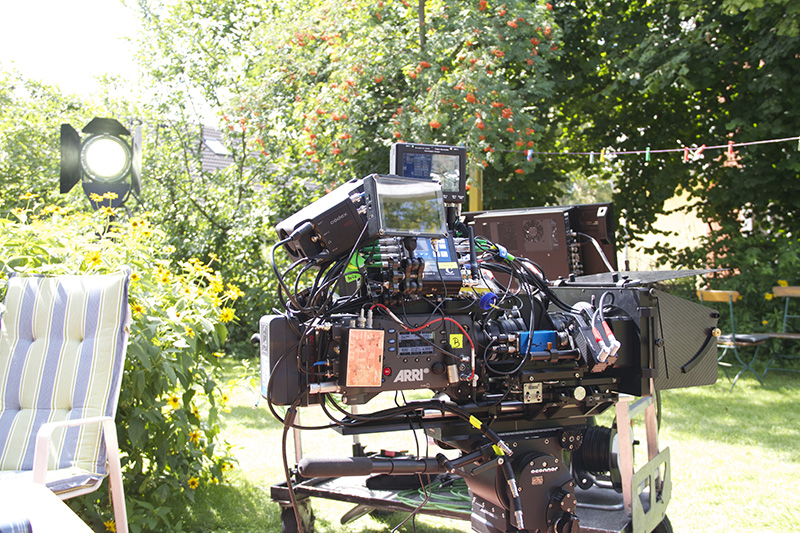

After many months of hard work, the team produced two prototype camera systems, with ARRI building one rig for dollies and another for Steadicam. The central camera of the trifocal system was ARRI’s popular Alexa. The secondary cameras, one on either side of the Alexa, were Indiecams with 2K Bayer output, all connected to Convergent Design’s Gemini RAW Recorder. Lukk and his partners also worked on algorithms for generating depth maps, which depend on aligning the geometry of the primary and secondary cameras.
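Generating those depth maps means finding, for each pixel in one view, the matching pixel in another. After rectification (warping the images so that matching points lie on the same row), a textbook way to do this is block matching: slide a small window along the row and keep the shift with the lowest sum of absolute differences (SAD). The sketch below works on a single grayscale scanline and only illustrates that general technique; the article does not describe the team’s actual matching algorithm, and the function names, window size and sample rows are hypothetical.

```python
from typing import List, Sequence


def sad(a: Sequence[float], b: Sequence[float]) -> float:
    """Sum of absolute differences between two equal-length windows."""
    return sum(abs(x - y) for x, y in zip(a, b))


def scanline_disparity(left: Sequence[float], right: Sequence[float],
                       max_disp: int, half_win: int = 1) -> List[int]:
    """Estimate per-pixel disparity along one rectified row.

    Assumes a point at column x in the left row appears at column x - d in the
    right row. Pixels with no valid window default to disparity 0; ties keep
    the smallest d.
    """
    n = len(left)
    disparities = []
    for x in range(n):
        best_d, best_cost = 0, float("inf")
        for d in range(max_disp + 1):
            # Skip shifts whose comparison window would fall outside either row.
            if x - half_win - d < 0 or x + half_win >= n:
                continue
            cost = sad(left[x - half_win: x + half_win + 1],
                       right[x - d - half_win: x - d + half_win + 1])
            if cost < best_cost:
                best_d, best_cost = d, cost
        disparities.append(best_d)
    return disparities


if __name__ == "__main__":
    # Hypothetical rows: the bright feature sits 2 pixels further left in the right view.
    left = [0, 0, 0, 10, 20, 10, 0, 0, 0, 0]
    right = [0, 10, 20, 10, 0, 0, 0, 0, 0, 0]
    print(scanline_disparity(left, right, max_disp=3))  # pixels 3-5 come out at 2
```

In flat or noisy regions many shifts score almost equally, so the chosen disparity becomes unreliable; this is why noisy, low-dynamic-range secondary images degrade depth estimation.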

Generation 2.5 added zoom lenses to the secondary cameras, and each new version was tested extensively. “The system has gone through several iterations,” says Lukk. “But our first proof-of-concept cameras already showed a lot of promise.”

The goal was to test hybrid 3D on a real production, so Lukk directed Make Believe, a 10-minute film about a young girl and her imagination. Working with Disney, ARRI developed a more streamlined, practical rig, and pre-production on Make Believe began in the suburbs of Berlin in August 2013. The film was shot on location in three days with a German cast and crew, and it uses very little dialogue. In one crucial scene, the Steadicam operator circles the lead actress with the three-camera rig, moving within very tight confines. The rig and cameras worked perfectly during production.

In post, StereoD’s team extracted the information needed to separate objects from the image with as little rotoscoping as possible, and then converted the movie to 3D. Keeping close records, Lukk calculated how much time and money the production saved compared with traditional ways of creating 3D movies. “We wanted to see how much rotoscoping we could eliminate,” he says. “We figure it has the potential to save 30 percent using the current generation of secondary cameras.” Given the high cost of both 2D-to-3D conversion and native 3D cinematography, that would be a significant saving.

This early-stage production also highlighted areas the team needs to improve. “We did get the depth maps,” says Lukk. “But the secondary cameras needed a lot more dynamic range. In dark scenes, the ARRI Alexa could pull out information, but the secondary cameras went to noise.”

Disney and its partners are already at work on Generation 4 of the pioneering hybrid 3D camera system. Current R&D focuses on two areas: back-end algorithms and next-generation cameras. Codex has introduced the Action CAM, a very small camera with integrated recording, which eliminates the weight of an attached recorder. The Action CAM also synchronizes with the ARRI Alexa signal and offers better dynamic range, adds Lukk.

With regard to algorithms, Lukk reports that the group is moving towards integrating existing algorithms into The Foundry’s Nuke compositing software. “Once that’s done, the workflow for the entire pipeline will be there,” he says.

By the end of summer 2014, Generation 4 of the hybrid 3D system will be operational. Lukk looks forward to seeing how the system may evolve to become even more capable. “It has the potential to eliminate blue/green screen altogether,” he says. “As it is now, it’s the first step into computational cinematography. We can do depth, refocusing, add resolution and high dynamic range. The technique will soon increase to add high frame rates.”

With fourth-generation cameras offering global shutter, improved dynamic range and resolution for the secondary cameras, a simplified sync system and a better recording system, hybrid 3D is close to becoming a fully viable system. “We’re also looking at live stereo preview,” adds Lukk. “And, for the segmentation process, the goal is to improve color matching and rectification process; improve temporal filters that will hold edges; and search for the best depth/disparity map.”

“Computational cinematography is where things are going,” he enthuses. “Instead of one massive camera, filmmakers can use a group of off-the-shelf small cameras and then use computational algorithms to put the images all together. Although it’s still five to ten years out for computational cinematography to be fully functional, this hybrid 3D system is the first step. By the end of 2014, it will be a workable system.”

In the meantime, Make Believe, the first artistic fruit of this unusual collaboration, was recently completed. Lukk worked with composer Andrea Dimity to make use of Dolby Atmos. “She was fearless,” says Lukk. “She liked the idea of a traveling score, making the instruments fly around. The score really added to Make Believe, especially the stereoscopic aspect.”

Make Believe is on schedule to be shown at IBC in Amsterdam for European audiences.